PH.D. PROJECTS

The following are selected projects that I completed as a research assistant at North Carolina State University.

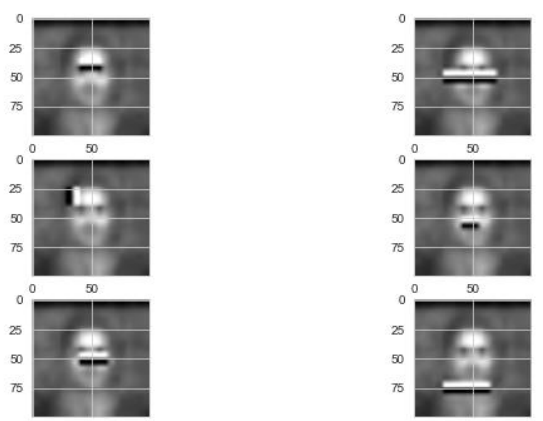

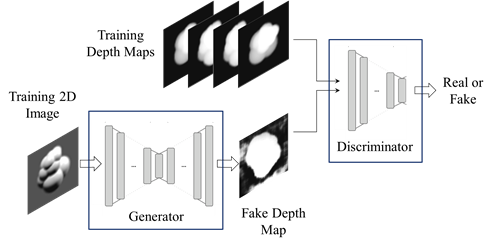

Virtual Manipulation

This project presented a case study comparing three types of manipulation hardware (image-based, infrared-based, and magnetic-based). It also proposed a snap-to-fit method that improves immersion by addressing limitations of advanced VR interaction metaphors. (J2 and C3)

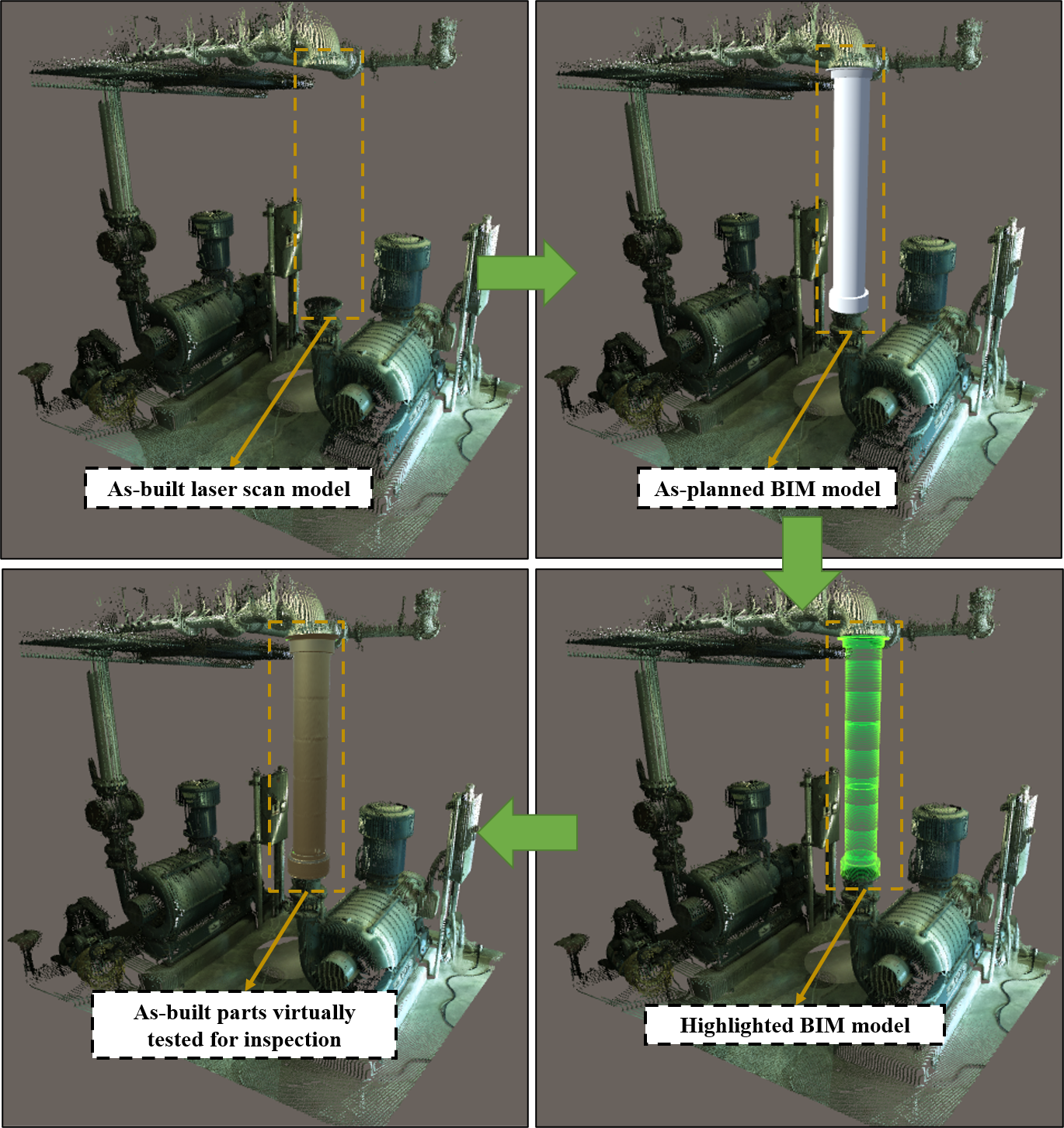

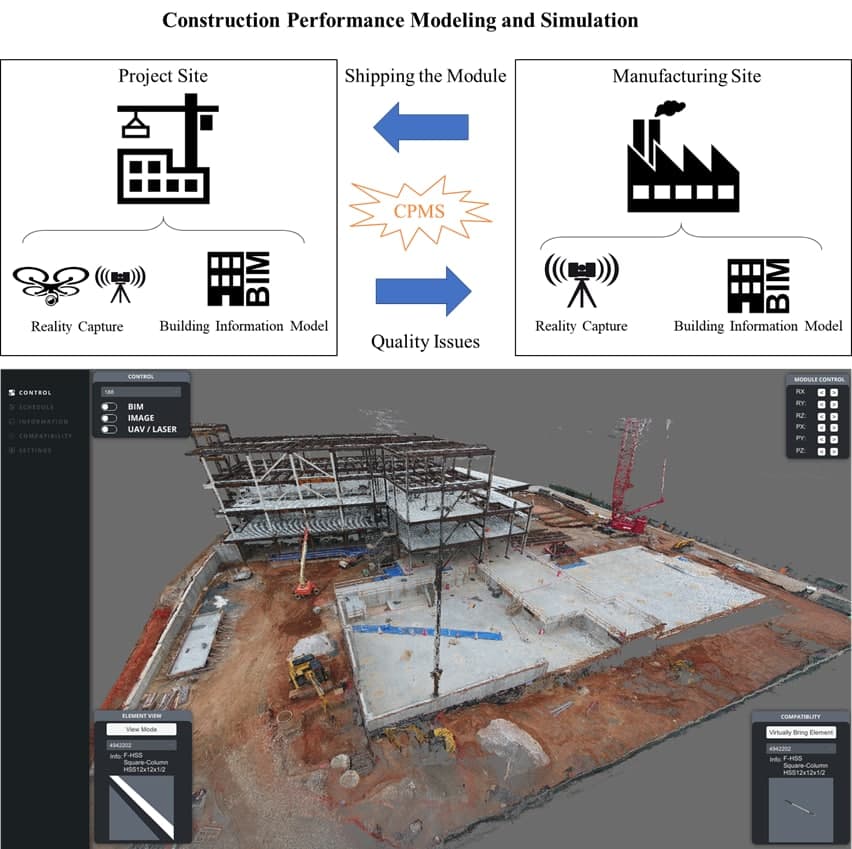

Construction Performance Modeling and Simulation

This project introduced a compatibility-checking platform (for both desktop and web) that loads point clouds and virtually checks the compatibility of offsite modules. (P1, J2, and J7)

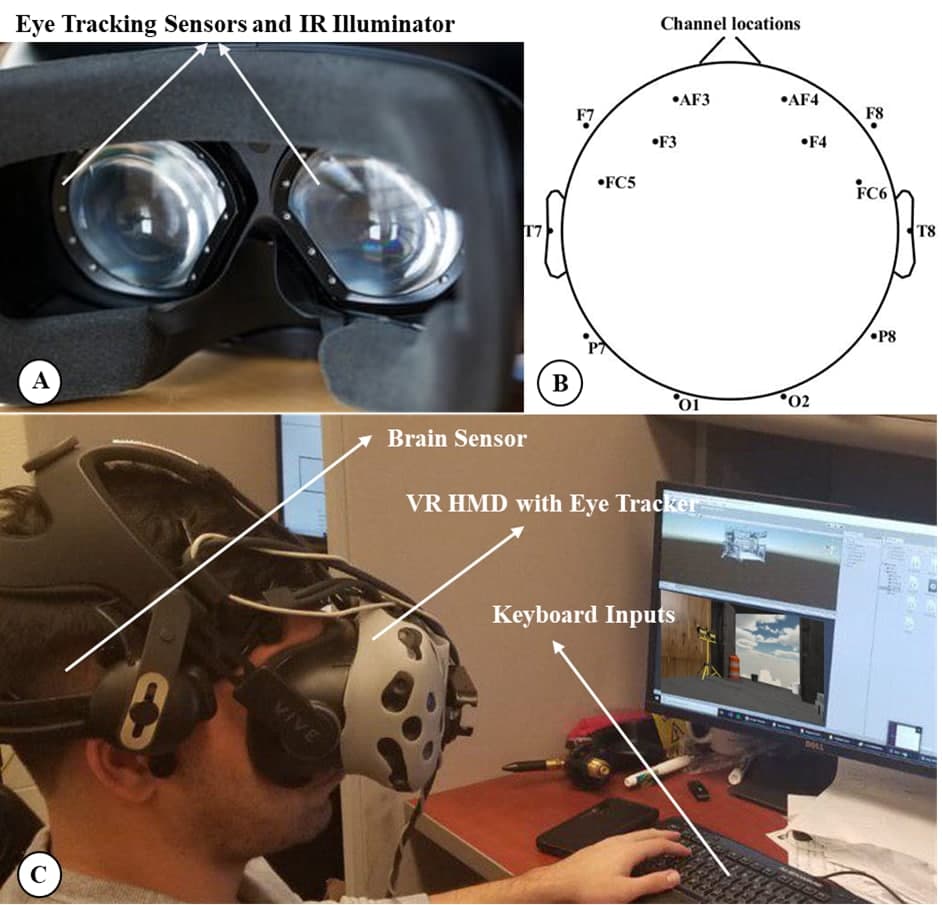

Hazard Recognition in VR

Proposed a platform that analyzes the overall performance of workers in a visual hazard recognition task and identifies the hazards that need additional intervention for each worker. This study provides novel insights into how a worker’s brain and eyes act simultaneously during a visual hazard recognition process. (J1 and C1)

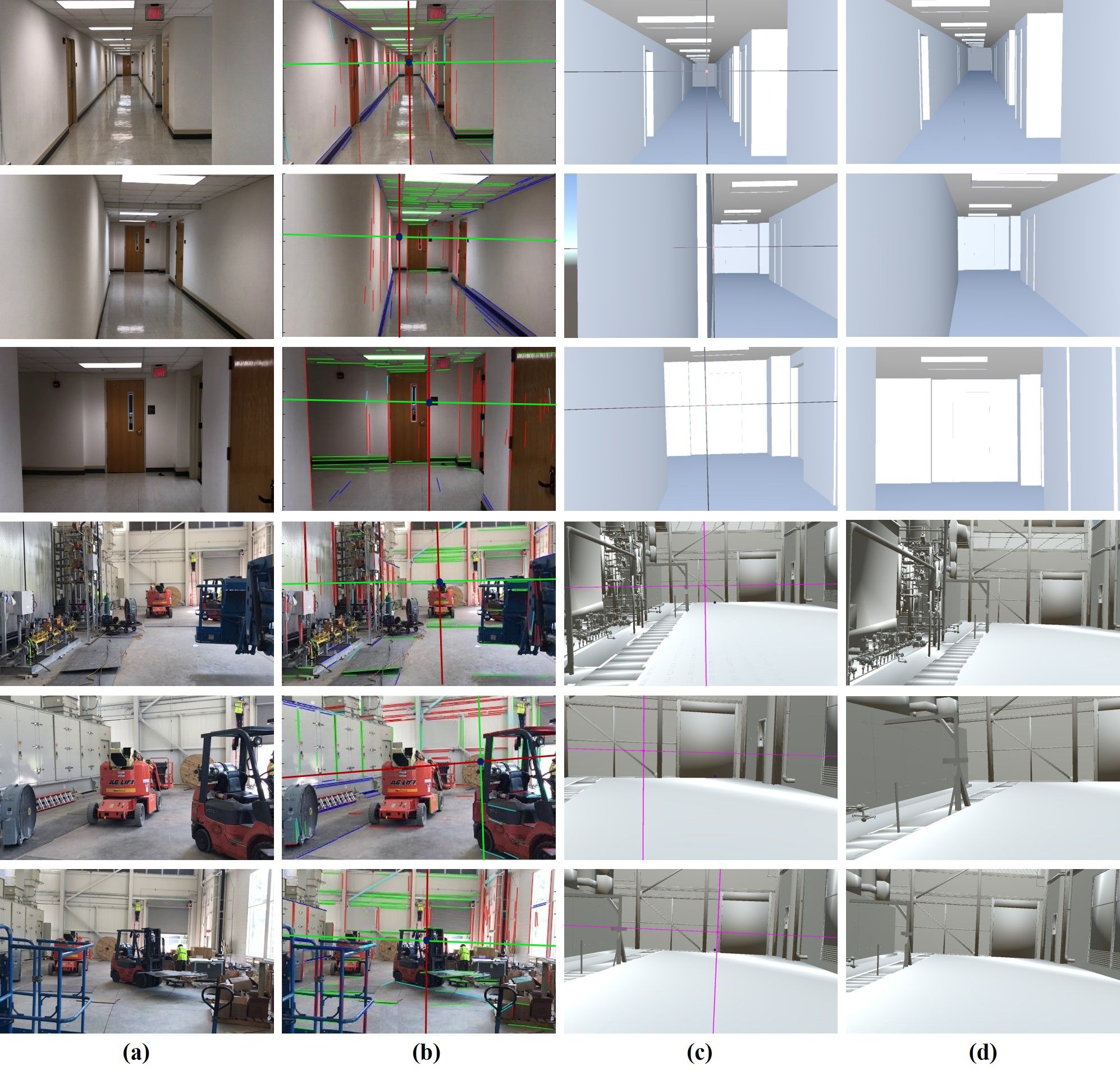

Perspective Alignment using BIM and SLAM

Proposed a method that recovers the camera poses of image frames in the BIM coordinate system by combining augmented monocular simultaneous localization and mapping (SLAM) with perspective detection and matching between the image frames and their corresponding BIM views. (J6)

Real-time Cost Estimation in VR

To identify the influence of immersive virtual environments (IVEs) on project management, this project proposed a systematic approach through which stakeholders can (1) visualize and interact with 3D models in one-to-one scaled, realistic, fully immersive virtual environments; and (2) visualize the dollar-amount changes that result from change orders. (J3, C5, and C6)

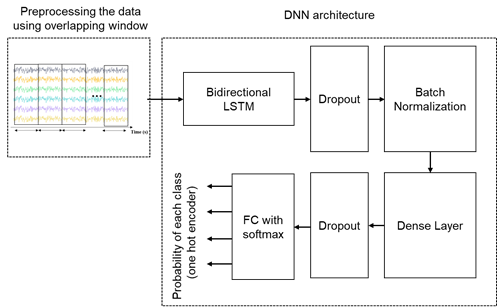

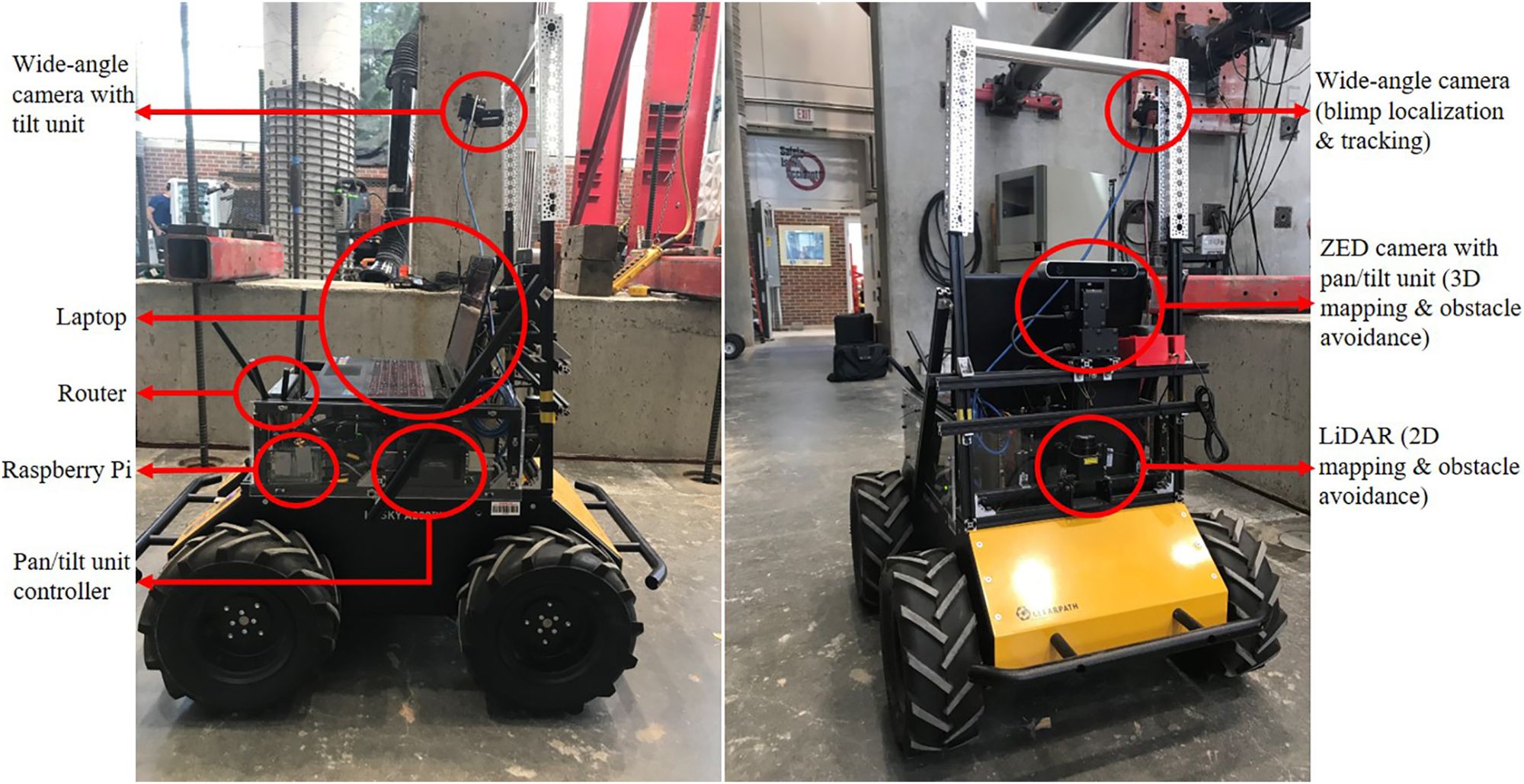

Vision-based Autonomous Robotic System

This project designed a UAV-UGV team that integrates two custom-built mobile robots. The UGV navigates autonomously through space using its onboard sensors. The UAV acts as an external eye for the UGV, observing the scene from a vantage point that is inaccessible to the UGV. The relative pose of the UAV is estimated continuously, which allows it to hold a fixed position relative to the UGV. (J5 and C4)